Introduction

The NVIDIA A100 40GB Tensor Core GPU delivers unprecedented acceleration at any scale for AI, data analytics, and HPC to meet the world’s most demanding computing challenges. As the engine of the NVIDIA data center platform, the A100 can efficiently scale up to thousands of GPUs or, using Multi-Instance GPU (MIG) technology, be partitioned into seven isolated GPU instances to accelerate workloads of all sizes. In addition, the A100’s third-generation Tensor Core technology accelerates every precision level for a wide range of workloads, shortening both time to insight and time to market.

GPU Specifications

- Bracket: Full height, double width

- CUDA Cores: 6912

- GPU Heat Sink: Passive

- Interface: PCIe 4.0 x16

- Memory Bandwidth: 1,555 GB/s

- Memory Size: 40GB

- Memory Type: ECC HBM2

- Power Connectors: 8-pin CPU power connector

- Single-Precision Floating-Point Performance: 19.5 TFLOPS

- Tensor Cores: 432
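The two headline figures in this list can be cross-checked with simple arithmetic. The sketch below assumes a boost clock of 1,410 MHz, a 5,120-bit HBM2 interface, and a 1,215 MHz double-data-rate memory clock. None of these values appear in the list above; they are assumptions taken from NVIDIA's published A100 specifications, and the function names are hypothetical.

```python
# Rough sanity check of two spec-sheet figures for the A100 40GB PCIe.
# Assumed inputs (not listed in this article): boost clock, bus width, memory clock.

CUDA_CORES = 6912             # from the specification list
BOOST_CLOCK_GHZ = 1.41        # assumption: 1,410 MHz boost clock
FLOPS_PER_CORE_PER_CLOCK = 2  # one fused multiply-add counts as two operations

BUS_WIDTH_BITS = 5120         # assumption: HBM2 interface width
MEMORY_CLOCK_GHZ = 1.215      # assumption: HBM2 memory clock
TRANSFERS_PER_CLOCK = 2       # double data rate


def peak_fp32_tflops() -> float:
    """Theoretical single-precision peak in TFLOPS."""
    gflops = CUDA_CORES * FLOPS_PER_CORE_PER_CLOCK * BOOST_CLOCK_GHZ
    return gflops / 1000


def peak_bandwidth_gb_s() -> float:
    """Theoretical memory bandwidth in GB/s."""
    bytes_per_transfer = BUS_WIDTH_BITS / 8
    return bytes_per_transfer * MEMORY_CLOCK_GHZ * TRANSFERS_PER_CLOCK


if __name__ == "__main__":
    print(f"FP32 peak: {peak_fp32_tflops():.2f} TFLOPS")
    print(f"Memory bandwidth: {peak_bandwidth_gb_s():.1f} GB/s")
```

Under these assumptions the results come out at roughly 19.5 TFLOPS and roughly 1,555 GB/s. Both are theoretical peaks, not measured throughput.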

Accelerating the Most Critical Work of Our Time - NVIDIA A100 40GB

The NVIDIA A100 40GB Tensor Core GPU delivers unprecedented acceleration at any scale for AI, data analytics, and high-performance computing (HPC) to meet the world’s most demanding computing challenges. As the engine of the NVIDIA data center platform, the A100 can efficiently scale to thousands of GPUs or, with NVIDIA Multi-Instance GPU (MIG) technology, be partitioned into seven GPU instances to accelerate workloads of all sizes. Third-generation Tensor Cores accelerate every precision for diverse workloads, speeding time to insight and time to market.

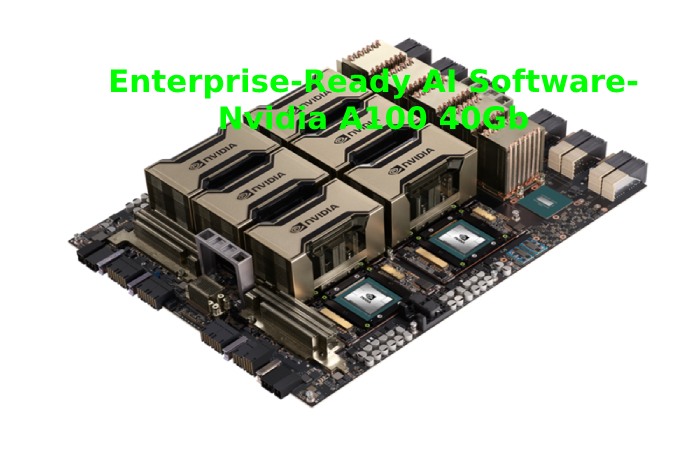

Enterprise-Ready AI Software - NVIDIA A100 40GB

The NVIDIA EGX™ platform includes optimized software that delivers accelerated computing across the infrastructure. With NVIDIA AI Enterprise, companies can access a comprehensive suite of cloud-native AI and data analytics software that is optimized, certified, and supported by NVIDIA to run on VMware vSphere with NVIDIA-Certified Systems. NVIDIA AI Enterprise also includes key NVIDIA technologies that enable rapid deployment, management, and scaling of AI workloads in the modern hybrid cloud.

Deep Learning Training

AI models are exploding in complexity as they take on next-level challenges like precise conversational AI and deep recommendation systems. Training them requires enormous processing power and scalability.

The NVIDIA A100’s third-generation Tensor Cores with Tensor Float 32 (TF32) precision deliver up to 20 times the performance of the previous generation with zero code changes, and an additional 2x boost with automatic mixed precision and FP16. Combined with third-generation NVIDIA NVLink, NVIDIA NVSwitch, PCIe Gen4, NVIDIA Mellanox InfiniBand, and the NVIDIA Magnum IO software SDK, it is possible to scale to thousands of A100 GPUs. As a result, large AI models such as BERT can be trained in just 37 minutes on a cluster of 1,024 A100 GPUs, delivering unprecedented performance and scalability.

NVIDIA’s leadership in training was demonstrated in MLPerf 0.6, the first industry-wide benchmark for AI training.
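To make the TF32 trade-off concrete: TF32 keeps the 8-bit exponent of FP32 but stores only 10 explicit mantissa bits instead of 23. The sketch below emulates that reduced precision in plain Python by rounding the bit pattern of an FP32 value. It is an illustration of the number format only; the helper name `to_tf32` is hypothetical, the rounding mode is an assumption, and it handles only finite inputs that fit in FP32.

```python
import struct

FP32_MANTISSA_BITS = 23
TF32_MANTISSA_BITS = 10
DROPPED_BITS = FP32_MANTISSA_BITS - TF32_MANTISSA_BITS  # 13 low-order bits are dropped


def to_tf32(value: float) -> float:
    """Round a finite number to FP32, then keep only the top 10 mantissa bits.

    Ties are rounded away from zero (an assumption made for simplicity).
    """
    # Reinterpret the FP32 encoding of the value as a 32-bit unsigned integer.
    bits = struct.unpack("<I", struct.pack("<f", value))[0]
    # Add half of the dropped range, then clear the dropped bits.
    half = 1 << (DROPPED_BITS - 1)
    mask = ~((1 << DROPPED_BITS) - 1) & 0xFFFFFFFF
    bits = (bits + half) & mask
    return struct.unpack("<f", struct.pack("<I", bits))[0]


if __name__ == "__main__":
    for x in (1.0, 1.0 + 2**-10, 1.0 + 2**-12, 3.14159265):
        print(x, "->", to_tf32(x))
```

Values that differ from a representable TF32 number by less than about one part in 2,048 collapse onto it, which is why TF32 suits deep learning training, where range usually matters more than the last few digits of precision.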

Deep Learning Inference - NVIDIA A100 40GB

The A100 introduces innovative new features to optimize inference workloads. It provides unprecedented versatility by accelerating a full range of precisions, from FP32 to FP16 to INT8 and down to INT4. Multi-Instance GPU (MIG) technology allows multiple networks to operate simultaneously on a single A100 GPU for optimal utilization of computing resources. In addition, structural sparsity support delivers up to twice the performance on top of the A100’s other inference performance gains.
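As background that this article does not spell out: NVIDIA's Ampere architecture documentation describes structural sparsity as a 2:4 pattern, meaning that in every group of four consecutive weights at most two are non-zero. The sketch below shows one simple way to produce such a pattern by magnitude. The function name `prune_2_of_4` is hypothetical, and real workflows would rely on NVIDIA's own tooling and typically fine-tune the network after pruning.

```python
from typing import List


def prune_2_of_4(weights: List[float]) -> List[float]:
    """Zero the two smallest-magnitude values in each consecutive group of four."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned: List[float] = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest magnitudes; ties go to the earlier index.
        keep = sorted(range(4), key=lambda i: (-abs(group[i]), i))[:2]
        pruned.extend(v if i in keep else 0.0 for i, v in enumerate(group))
    return pruned


if __name__ == "__main__":
    print(prune_2_of_4([0.1, -0.9, 0.5, 0.05, 1.0, 1.0, 1.0, 1.0]))
```

Because exactly half of each group is zero, the hardware can skip those multiplications, which is where the "up to twice the performance" figure comes from.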

NVIDIA already delivers market-leading inference performance, as demonstrated by an across-the-board sweep of MLPerf Inference 0.5, the first industry-wide benchmark for inference. The A100 offers up to 20 times more performance to extend that lead further.

High-Performance Computing

To unlock next-generation discoveries, scientists look to simulations to better understand complex molecules for drug discovery, physics for potential new energy sources, and atmospheric data to better predict and prepare for extreme weather patterns. The NVIDIA A100 40GB introduces double-precision Tensor Cores, the most significant milestone since the introduction of double-precision computing on GPUs for HPC. This allows researchers to reduce a 10-hour double-precision simulation running on NVIDIA V100 Tensor Core GPUs to just four hours on the A100. HPC applications can also take advantage of TF32 precision on A100 Tensor Cores to achieve up to 10 times higher throughput for single-precision dense matrix multiplication operations.

High-Performance Data Analytics - NVIDIA A100 40GB

Customers need to be able to analyze, visualize, and turn massive data sets into insights. But scale-out solutions often get bogged down because these data sets are scattered across multiple servers. Accelerated servers with the A100 deliver the computing power needed, along with 1.6 terabytes per second (TB/s) of memory bandwidth and scalability through third-generation NVLink and NVSwitch, to handle these massive workloads. Combined with NVIDIA Mellanox InfiniBand, the Magnum IO SDK, and the RAPIDS suite of open source software libraries, including the RAPIDS Accelerator for Apache Spark for GPU-accelerated data analytics, the NVIDIA data center platform is uniquely able to accelerate these massive workloads at unprecedented levels of performance and efficiency.

Conclusion

The NVIDIA A100 40GB is part of NVIDIA’s complete data center solution, which incorporates building blocks across hardware, networking, software, libraries, and optimized AI models and applications from NGC. As the most powerful end-to-end AI and HPC platform for data centers, it enables researchers to deliver real-world results and deploy solutions in production at scale. We hope this article has been helpful.